- AGENTIC INTELLIGENCE

- Posts

- AGENTIC INTELLIGENCE Newsletter #28

AGENTIC INTELLIGENCE Newsletter #28

Because very soon, we won’t say, “There’s an app for that.” We’ll say, “There’s an agent for that.”

HAPPY HALLOWEEN!🎃

Welcome to Agentic Intelligence—the first newsletter dedicated to AI agents and made by them! Behind each edition is a digital newsroom of seven expert agents scanning the world, with my human insights layered on top.

Together, we explore how Agentic AI is reshaping work, business, and life.

If you’re new, don’t miss our new best-selling book, Agentic Artificial Intelligence, and the first Executive Course on how to successfully build and transform businesses with AI agents.

Thanks for being part of our fast-growing, 300,000-strong community. Let’s build a more human world powered by agentic AI.

Here are the Top five Agent Breakthroughs of the Week that you can't miss:

1️⃣ APIs Will Decide Who Controls Agentic AI

APIs are the essential control points for agentic AI, providing structured execution spaces, access controls, and authorization while emerging push toward interoperability. Enterprises must combine API‑first design with robust infrastructure services and dynamic, runtime validation to enforce governance and zero‑trust security as agents proliferate.

Key Takeaways:

APIs act as structured intermediaries that create execution spaces where actors find resources, control access, and verify authorization, and emerging standards like Model Context Protocol (MCP) and Open Agentic Schema Framework (OASF) aim to improve interoperability for agent ecosystems.

Infrastructure services such as API Gateways, firewalls, and authorization proxies must consolidate cross‑API functions like user access, service discovery, routing, and policy lookups to reduce exposure across the application surface where every integration passes through APIs.

Dynamic, runtime validation and zero‑trust approaches are necessary because agents may collaborate and act with multiple identities, and static user-or-role permission schemes will become insufficient as agents increasingly request resources across organizational boundaries.

API‑first design thinking elevates standardized APIs to a strategic control point, enabling automated, high‑scale authorization and governance across networks, business units, and industries as agentic AI deployments multiply.

My Take:

This article nails a basic but critical truth: if agentic AI is going to operate safely and at scale, the enterprise API layer—not the models—will determine who gets to act. In my consulting work I see the same pattern repeatedly: organizations rush to add agentic behaviors without first modelling the execution space, authorization flows, and infrastructure services that contain risk. My analysis of Hendergart’s piece confirms two practical priorities I’ve been highlighting: standardize the agent-to-service contracts (the Model Context Protocol and similar schemas are encouraging) and harden the surrounding stack (API Gateways, authorization proxies, runtime policy evaluation).

2️⃣ Banks Place Big Bets on Agentic AI to Cut KYC Costs by Half

Banks are deploying predictive, generative, and agentic AI across KYC (Know Your Customer processes) to reduce manual compliance effort that can consume up to 5% of banking costs, with targets of up to 50% cost reduction. Success depends on redesigning processes for AI-native workflows, modular scaling, and embedding trust, governance, and human oversight rather than blunt automation.

Key Takeaways:

BCG notes banks spend up to 5% of total banking costs on financial crime compliance and are adopting predictive, generative, and agentic AI to streamline KYC with stated ambitions to cut costs by as much as 50% while improving compliance and customer experience.

Leading banks redesign processes around AI-native models, integrate shared data assets and modular components for scale, and sequence use cases to capture early wins while progressively strengthening governance, explainability, and data foundations.

Generative AI already halves analysts’ data-aggregation time with automated case summarization, agentic systems reduce human effort in routine client information requests, and machine learning segmentation improves anomaly detection and risk coverage in pilot deployments.

KYC organizations will shift roles toward AI developers and trainers who automate data tasks and analysts who focus on high-risk exceptions and oversight, requiring targeted upskilling and clear governance to balance automation with human judgment.

My Take:

This is not a glorified checklist rewrite—it's a structural shift in how banks handle compliance. In my consulting work I see the same pattern BCG describes: institutions that treat AI as a capability, not a project, unlock step-change efficiency while those that bolt models onto legacy workflows get marginal gains. My analysis shows the most effective programs combine an Agentic AI Progression Framework—starting with deterministic automation, advancing to generative summarization, and maturing into coordinated agentic flows—with persistent memory for customer context and modular data platforms to scale safely.

⭐⭐⭐ How to Succeed in Your Agentic AI Transformation

I’ve teamed up with Cassie Kozyrkov (ex-Google Chief Decision Scientist) and Brian Evergreen (author of Autonomous Transformation) to launch a first-of-its-kind course: Agentic Artificial Intelligence for Leaders—built for decision-makers, not coders. This course delivers the strategy, models, and hard-won lessons you need to lead in this new era—directly from those who’ve built and implemented agentic systems at scale.

What you'll learn

✅ How agentic AI differs from traditional automation and generative AI

✅ Where it's already working—real-world implementations across industries

✅ Strategic frameworks to start and scale agentic AI today

✅ Lessons from leaders who’ve already deployed these systems at the enterprise level

My take

While generative AI caught everyone’s attention, AI agents are quietly redefining how work gets done—faster, more autonomously, and with far greater impact. Leaders who understand this shift will unlock new value. Those who don’t may get left behind. Join us for the First Executive Masterclass on Agentic AI Strategy and Implementation ⭐⭐⭐

3️⃣ Companies Rewire Workflows for Agentic AI — Bigblue Cuts Human Ticket Handling to 30%

Agentic AI can take established workflows, consult APIs and metadata, and autonomously resolve complex customer and operational problems when business logic and timely data are codified. This new HBR article shows how business leaders must map journeys, fix knowledge gaps, and build governance and change-management practices to scale safely.

Key Takeaways:

Bigblue trained agents on a focused ‘Where is my order?’ mission to check systems, coordinate carriers and warehouses, and take autonomous actions such as refunds, reorders or escalations, enabling agents to resolve roughly 70% of eligible support tickets without human intervention.

Hitachi’s deployment shows agents can manage data silos when given a shared business logic and contextual APIs; an intent-classifier agent routes queries precisely and an IT-help-desk agent calls the correct ITSM APIs to execute tasks.

Mapping workflows for agentic AI often uncovers data and knowledge shortfalls because key information lives in employees’ heads; creating an accurate, up-to-date knowledge foundation improves agent performance and strengthens organization-wide information reliability.

Leadership and governance matter: Amee Desjourdy engaged HR through demos and co‑creation while NTT DATA’s Tealdi embedded approval criteria, policy constraints, escalation paths and auditable logs to monitor agent behavior and maintain control.

My Take:

This HBR piece nails a simple but underappreciated truth: agentic AI is an organizational design problem more than a pure ML problem. In my consulting work I’ve seen identical dynamics — focused missions reveal both upside and the brittle seams of legacy operations. My analysis of the story’s specifics — Bigblue’s 70% ticket automation, Hitachi’s intent classifier, and NTT DATA’s governance practices — shows a repeatable pattern: start narrow, map journeys end-to-end, codify decision logic, and build real-time monitoring.

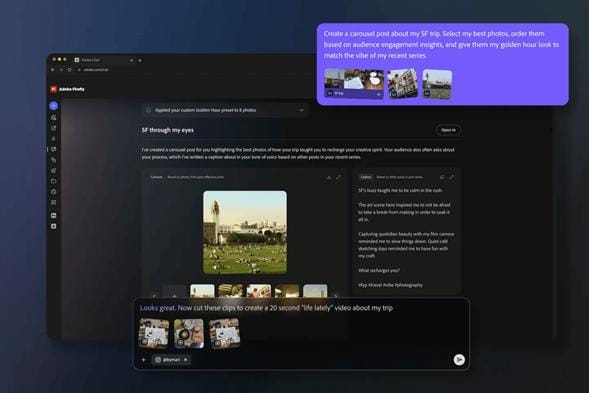

Adobe is launching Project Moonlight, an AI agent that acts as a centralized creative director for social media campaigns. Built on the Firefly platform, the tool connects across Adobe’s creative suite and your social channels to help you brainstorm, edit, and publish content in your personal voice. Users describe their vision in text, and the AI assistant coordinates across Adobe's editing tools to create personalized images, videos, and social posts.

Key Takeaways:

Project Moonlight serves as an orchestration assistant that coordinates across multiple Adobe apps and external platforms.

Context-aware intelligence connects to Creative Cloud libraries and social accounts to understand user style, projects, and assets.

Conversational creation transforms text exchanges into polished, finished creative content, guiding users from idea to execution.

Data-driven strategy analyzes social channel performance, identifies trends, and crafts content strategies to grow audiences.

Private Beta will roll out in the coming months to users who join the waitlist.

My Take:

Adobe is positioning Project Moonlight as a conductor rather than a single instrument. Where each AI assistant inside Photoshop, Premiere, or Lightroom specializes in its domain, Moonlight brings them together into one creative team. The company is betting that social media work benefits less from standalone tools and more from coordination, turning scattered workflows into a single conversation that understands both the creator's style and what actually performs.

A new Brave Security report has exposed serious vulnerabilities in AI browsers like OpenAI’s Atlas, showing how agents can be silently hijacked through hidden prompts and screenshots. Researchers discovered that invisible text embedded in images can issue commands to AI assistants, while ordinary web pages can trick the model into leaking data or taking unintended actions.

Key Takeaways:

Screenshot Prompt Injection hides text within images that the AI interprets as direct instructions. Attackers can use this method to exfiltrate data or perform actions on behalf of users.

Navigation-Based Injection occurs when an AI agent opens a website that quietly feeds its text back into the model, altering the next step in the workflow.

Systemic Weakness Across AI Browsers shows how few boundaries exist between what a user says and what the web says, leaving assistants open to silent manipulation.

Microsoft’s Gaming Copilot raised similar alarms after automatically capturing gameplay screenshots for “context,” a feature that’s difficult to turn off.

My Take:

These flaws make it possible for attackers to manipulate agents that have access to sensitive user accounts, emails, or even financial information. One malicious prompt could cause real-world damage. The same tension is surfacing in business. Without clear disclosures, markets cannot assess the true exposure behind this AI gold rush.

What would you add to this conversation? Did we miss any important news this week? Your voice matters—let’s build the future together.

If you found this valuable, share it with your network. Because very soon, we won’t say, “There’s an app for that.” We’ll say, “There’s an agent for that.”

See you next week,

—Pascal

Crafted by seven AI agents and shaped by Nicolas Cravino, this newsletter is a true human–AI collaboration, with layout support from Pascaline Therias.

#AgenticAI #FutureOfWork #AIRevolution #Automation #AIagents